Synthetic Data Generation via Depth Sensor Noise Simulation

Published on December 19, 2017

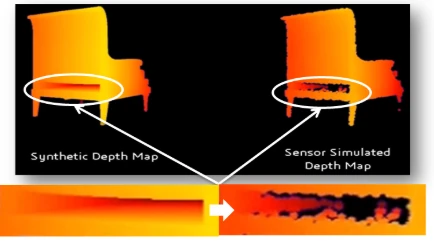

High-quality training data is one of the main limiting factors in depth-based perception systems. While synthetic 3D scenes are easy to generate at scale, the depth maps obtained from them are too perfect compared to what real sensors deliver.

In my patent US20180182071A1, I introduced a method to bridge the gap between synthetic and real depth data by explicitly modeling and learning depth sensor noise, then applying this noise to artificially generated 3D scenes.

The core idea is simple but powerful:

instead of trying to make synthetic scenes more realistic, make synthetic depth maps behave like a real sensor.

Motivation: Why Synthetic Depth Data Fails in Practice

Depth sensors such as Time-of-Flight (ToF) or structured-light cameras are affected by many sources of noise:

- surface reflectance and material properties,

- multi-path interference,

- depth-dependent variance,

- sensor electronics and temperature,

- quantization and reconstruction artifacts.

Most synthetic pipelines ignore these effects and rely on idealized depth rendering (ray casting or z-buffering). Models trained on such data often fail when deployed on real sensors due to a domain gap.

The motivation behind my work was to explicitly model this gap and make synthetic depth data sensor-specific.

Core Idea of the Patented Approach

The patented method is based on learning a noise-adding function that maps:

- a noiseless depth map generated from a virtual 3D scene

to - a noisy depth map representative of a real physical sensor.

This function is learned from paired data:

- a noiseless depth map computed virtually for a given scene and sensor pose,

- the corresponding noisy depth map captured by a real depth sensor at the same pose.

Once learned, this noise model can be reused on arbitrary synthetic 3D scenes.

This enables large-scale generation of realistic depth data without repeated physical capture campaigns.

Synthetic 3D Scene Generation

The pipeline starts with artificially generated 3D scenes, which can include:

- object models,

- indoor or outdoor environments,

- controlled geometry and layout variations.

From these scenes:

- A virtual depth sensor is placed at a known pose

- A noiseless depth map is computed using software rendering or ray tracing

- This depth map represents the ideal measurement, free of sensor artifacts

At this stage, the data is geometrically correct — but not realistic.

Learning the Sensor Noise Model

The key contribution of the patent is the learning phase.

For a given real sensor:

- multiple scene configurations and sensor poses are used,

- each configuration produces a pair

(noiseless synthetic depth, real noisy depth).

From these pairs, a noise function is learned.

This function captures:

- depth-dependent noise behavior,

- sensor-specific distortions,

- systematic measurement errors.

The noise model can be represented as:

- a learned mapping function,

- or a data structure encoding noise statistics conditioned on depth and context.

Applying Noise to Synthetic Scenes

Once the noise model is learned, it can be applied to any new synthetic depth map:

- New 3D scenes can be generated arbitrarily

- Noiseless depth is rendered from them

- The learned noise function is applied

- The output depth map closely matches what the real sensor would produce

This decouples:

- scene creation

from - sensor behavior simulation

making the pipeline modular and scalable.

Why This Matters for Training and Evaluation

This approach enables:

- large-scale depth dataset generation,

- sensor-specific synthetic data,

- controlled variability in geometry and noise,

- improved generalization of depth-based models.

It is particularly valuable for:

- depth estimation networks,

- 3D reconstruction systems,

- SLAM and tracking,

- robotics and AR/VR perception stacks.

By simulating the sensor instead of the world, the method reduces the reality gap that typically limits synthetic training data.

Positioning the Contribution

This work sits at the intersection of:

- synthetic data generation,

- sensor modeling,

- learning-based simulation.

Rather than relying purely on physics-based simulators or purely data-driven models, the approach combines:

- explicit 3D scene control

- with learned sensor noise behavior

resulting in a practical, scalable solution for real-world depth sensing systems.

Closing Thoughts

Synthetic data is only useful if it behaves like real data.

With this patented approach, I proposed a way to make synthetic depth maps indistinguishable — statistically — from real sensor outputs, while retaining full control over the underlying 3D scenes.

As depth sensors continue to evolve, sensor-aware synthetic data generation will remain a critical tool for building robust and generalizable perception systems.