Designing and Implementing an Automotive Driver Monitoring System (DMS)

Published on September 04, 2020

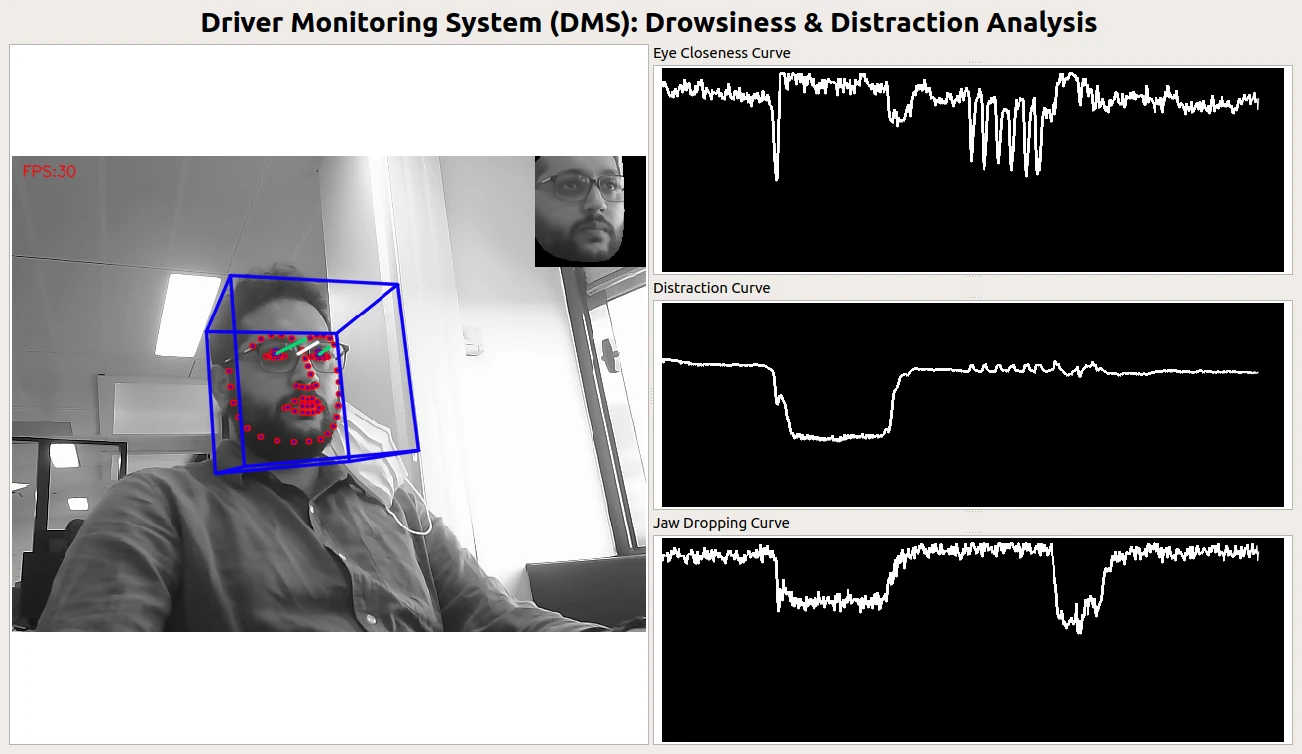

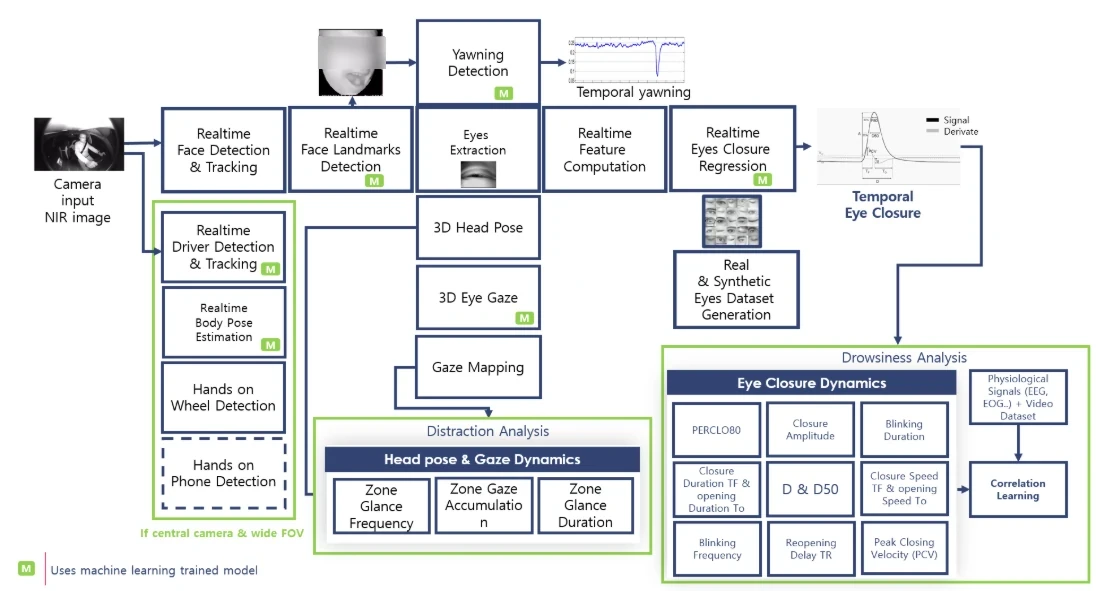

Driver Monitoring Systems (DMS) are often reduced to a single capability — detecting whether a driver is drowsy or distracted. In practice, a production-grade automotive DMS is a complex, multi-layer perception system, combining real-time computer vision, signal processing, temporal analysis, and machine learning.

This article describes the Driver Monitoring System I conceived and implemented. It is designed to run on near-infrared (NIR) in-cabin cameras under strict automotive constraints, and its goal is to detect driver drowsiness and distraction reliably and continuously across lighting conditions, head poses, and real driving behavior.

The diagram above illustrates the full system pipeline. Below, I walk through each component and explain its role, interactions, and technical challenges.

1. Camera Input (NIR In-Cabin Imaging)

The system starts with a near-infrared (NIR) camera mounted inside the vehicle cabin.

NIR is used because it:

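- works day and night when paired with active NIR illumination,

- is robust to ambient lighting changes,

- is non-intrusive for the driver, since NIR light is essentially invisible to the human eye.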

However, NIR images have:

- different texture statistics than RGB,

- strong reflections on glasses,

- lower contrast in some facial regions.

All downstream components must be designed with these constraints in mind.

2. Real-Time Face Detection and Tracking

The first perception stage performs real-time face detection and tracking.

Its responsibilities:

- locate the driver’s face in each frame,

- maintain temporal identity across frames,

- provide stable face regions for downstream processing.

This module uses a machine-learning-based detector, optimized for:

- NIR imagery,

- large head rotations,

- partial occlusions.

Tracking is essential to:

- reduce jitter,

- avoid re-detection costs,

- ensure temporal consistency.
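A common way to combine detection and tracking is to run the expensive detector only periodically or when the track is lost, use a cheap tracker in between, and smooth the resulting box. The sketch below illustrates that scheduling under stated assumptions: `detect` and `track` are hypothetical callables standing in for the real models, and the re-detection period and exponential-moving-average (EMA) weight are illustrative values, not the system's actual settings.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

Box = Tuple[float, float, float, float]  # (x, y, width, height) in pixels


@dataclass
class FaceTracker:
    detect: Callable[[object], Optional[Box]]      # hypothetical full-frame face detector
    track: Callable[[object, Box], Optional[Box]]  # hypothetical local tracker seeded with the last box
    redetect_every: int = 10                       # assumed: max consecutive tracked frames before re-detecting
    alpha: float = 0.5                             # assumed EMA weight of the newest measurement
    box: Optional[Box] = None
    frames_since_detect: int = 0
    detector_calls: int = 0

    def update(self, frame) -> Optional[Box]:
        measured = None
        # Cheap path: track from the previous box while the track is fresh.
        if self.box is not None and self.frames_since_detect < self.redetect_every:
            measured = self.track(frame, self.box)
            self.frames_since_detect += 1
        # Expensive path: no track yet, track lost, or periodic refresh.
        if measured is None:
            measured = self.detect(frame)
            self.detector_calls += 1
            self.frames_since_detect = 0
        if measured is None:
            self.box = None  # face lost: downstream modules should treat this frame as invalid
            return None
        if self.box is None:
            self.box = measured
        else:
            # EMA over box coordinates suppresses frame-to-frame jitter.
            self.box = tuple(self.alpha * m + (1.0 - self.alpha) * b
                             for m, b in zip(measured, self.box))
        return self.box
```

With these settings the detector runs on roughly one frame in eleven while the face stays tracked; raising `redetect_every` saves compute at the cost of slower recovery from tracker drift.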

3. Real-Time Face Landmark Detection

Once the face is detected, a facial landmark model estimates key points (eyes, nose, mouth, jaw).

This stage:

- feeds multiple downstream modules,

- must remain stable under motion,

- must run in real time on automotive hardware.

Landmarks are not an end goal — they are structural anchors used for geometry, gaze, eye analysis, and temporal reasoning.

This component relies on a deep learning model, trained specifically for in-cabin NIR conditions.
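Keeping landmarks stable under motion usually requires temporal filtering on top of the per-frame model. As one illustration, the sketch below applies the One Euro filter (Casiez et al., 2012), an adaptive low-pass filter, element-wise to an array of landmark coordinates. This is a swapped-in, widely used technique and an assumption on my part, not necessarily the filter used in this system; the cutoff and `beta` values are illustrative.

```python
import numpy as np


class OneEuroFilter:
    """One Euro filter applied element-wise to an array of landmark coordinates."""

    def __init__(self, freq: float, min_cutoff: float = 1.0, beta: float = 0.01, d_cutoff: float = 1.0):
        self.freq = freq              # frame rate in Hz
        self.min_cutoff = min_cutoff  # Hz; lower values smooth more when the head is still
        self.beta = beta              # speed coefficient; higher values reduce lag during fast motion
        self.d_cutoff = d_cutoff      # Hz; cutoff used to smooth the speed estimate
        self.x_prev = None
        self.dx_prev = None

    def _alpha(self, cutoff):
        # Smoothing factor of a first-order low-pass filter sampled at self.freq.
        tau = 1.0 / (2.0 * np.pi * cutoff)
        return 1.0 / (1.0 + tau * self.freq)

    def __call__(self, x):
        x = np.asarray(x, dtype=float)
        if self.x_prev is None:
            self.x_prev, self.dx_prev = x, np.zeros_like(x)
            return x
        # Smoothed speed of each coordinate, in units per second.
        dx = (x - self.x_prev) * self.freq
        a_d = self._alpha(self.d_cutoff)
        dx_hat = a_d * dx + (1.0 - a_d) * self.dx_prev
        # Adaptive cutoff: strong smoothing when still, less lag when moving fast.
        a = self._alpha(self.min_cutoff + self.beta * np.abs(dx_hat))
        x_hat = a * x + (1.0 - a) * self.x_prev
        self.x_prev, self.dx_prev = x_hat, dx_hat
        return x_hat
```

Usage: create one filter per tracked face and call it once per frame with the `(N, 2)` landmark array; reset it whenever the tracker loses the face.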

4. Eye Extraction and Eye-Closure Regression

From facial landmarks, the system extracts eye regions and performs eye-closure regression.

Instead of a binary open/closed decision, the system estimates:

- continuous eye-closure signals,

- frame-by-frame eye state confidence.

A machine-learning regression model outputs a smooth eye-closure signal, which is critical for:

- blink detection,

- micro-sleep analysis,

- temporal drowsiness metrics.
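A continuous closure signal makes blink segmentation straightforward. The sketch below assumes a per-frame closure value in [0, 1] (0 = fully open, 1 = fully closed) and uses two thresholds (hysteresis) so that noise around a single threshold does not split one blink into several. The threshold values and the `Blink` record are hypothetical choices for illustration.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Blink:
    start: int  # first frame with closure >= close_thr
    end: int    # first frame back below open_thr (exclusive)
    fps: float

    @property
    def duration_s(self) -> float:
        return (self.end - self.start) / self.fps


def detect_blinks(closure: Sequence[float], fps: float,
                  close_thr: float = 0.5, open_thr: float = 0.3) -> List[Blink]:
    """Segment blinks from a continuous eye-closure signal using hysteresis thresholds (assumed values)."""
    blinks: List[Blink] = []
    start = None
    for i, c in enumerate(closure):
        if start is None and c >= close_thr:
            start = i                       # eye crossed the "closed" threshold
        elif start is not None and c < open_thr:
            blinks.append(Blink(start, i, fps))
            start = None                    # eye has clearly reopened
    return blinks
```

Because the blink only ends below the lower threshold, a partially reopened eye hovering between the two thresholds is still counted as part of the same closure, which is what longer, drowsy blinks tend to look like.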

5. Temporal Eye Closure Analysis (Drowsiness)

Eye closure alone is insufficient. Drowsiness is a temporal phenomenon.

The system computes multiple time-based indicators, including:

- PERCLOS (e.g. PERCLOS80, the proportion of time within a window during which the eyes are at least 80% closed)

- blink frequency

- blink duration

- reopening delay

- closure and opening speeds

- peak closing velocity (PCV)

These features are computed over sliding windows and form the core of the drowsiness analysis module.

Some indicators are rule-based; others are learned or statistically modeled.
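Two of these indicators are simple enough to sketch directly. The code below computes a trailing-window PERCLOS (PERCLOS80 when `threshold=0.8`) and a peak closing velocity from a closure signal in [0, 1]. The 60-second window is an assumption for illustration, and early frames use whatever partial window is available.

```python
import numpy as np


def perclos(closure, fps: float, window_s: float = 60.0, threshold: float = 0.8) -> np.ndarray:
    """Per-frame fraction of the trailing window in which closure >= threshold."""
    closed = (np.asarray(closure, dtype=float) >= threshold).astype(float)
    n = max(1, int(round(window_s * fps)))     # window length in frames (assumed 60 s default)
    csum = np.concatenate(([0.0], np.cumsum(closed)))
    idx = np.arange(1, len(closed) + 1)        # frame i covers closed[lo:idx]
    lo = np.maximum(0, idx - n)
    return (csum[idx] - csum[lo]) / (idx - lo)


def peak_closing_velocity(closure, fps: float) -> float:
    """Largest positive rate of change of the closure signal, in closure units per second."""
    v = np.diff(np.asarray(closure, dtype=float)) * fps
    return float(v.max()) if v.size else 0.0
```

The cumulative-sum formulation makes each window update O(1), which matters when these features are recomputed on every frame on embedded hardware.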

6. Yawning Detection

Yawning is another strong indicator of fatigue.

The system includes:

- mouth region analysis,

- temporal mouth opening dynamics,

- yawn duration and frequency tracking.

Yawning detection relies on machine-learning classifiers combined with temporal filtering to avoid false positives.
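The temporal-filtering step can be sketched independently of the classifier. Assuming a per-frame mouth-opening score in [0, 1], the code below keeps only openings that are sustained long enough to be a yawn and bridges short dips (for example, brief landmark dropouts), so that speech or short mouth movements are rejected. All thresholds are hypothetical.

```python
from typing import List, Sequence, Tuple


def detect_yawns(mouth_open: Sequence[float], fps: float, open_thr: float = 0.6,
                 min_duration_s: float = 1.5, max_gap_s: float = 0.3) -> List[Tuple[int, int]]:
    """Return (start, end) frame ranges of sustained mouth openings (end exclusive)."""
    runs = []
    start = None
    # Find maximal runs above threshold; the trailing False closes a run at the end.
    for i, above in enumerate([v >= open_thr for v in mouth_open] + [False]):
        if above and start is None:
            start = i
        elif not above and start is not None:
            runs.append([start, i])
            start = None
    # Merge runs separated by short gaps.
    max_gap = int(round(max_gap_s * fps))
    merged: List[List[int]] = []
    for r in runs:
        if merged and r[0] - merged[-1][1] <= max_gap:
            merged[-1][1] = r[1]
        else:
            merged.append(r)
    # Keep only openings long enough to be a yawn.
    min_len = int(round(min_duration_s * fps))
    return [(s, e) for s, e in merged if e - s >= min_len]
```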

7. 3D Head Pose Estimation

Using facial landmarks, the system estimates 3D head pose:

- pitch,

- yaw,

- roll.

Head pose is essential for:

- understanding driver attention,

- supporting gaze estimation,

- contextualizing eye behavior.

This stage uses a geometric approach based on a 3D face model rather than direct pose classification, which keeps the estimate interpretable and stable.
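To make the model-based idea concrete, the sketch below fits a generic 3D face model to 2D landmarks under a scaled-orthographic camera: a least-squares affine fit, projection onto the nearest rotation, then conversion to pitch, yaw, and roll. The model coordinates are approximate generic values commonly used for illustration, and the orthographic camera is a simplifying assumption; a full perspective fit (for example OpenCV's `cv2.solvePnP` with calibrated intrinsics) is the usual choice in practice. Image landmarks are assumed to be converted to a y-up frame (negate image y) before the call.

```python
import numpy as np

# Approximate generic 3D face model (mm); illustrative, not calibrated to any driver.
MODEL_3D = np.array([
    [0.0, 0.0, 0.0],        # nose tip
    [0.0, -63.6, -12.5],    # chin
    [-43.3, 32.7, -26.0],   # eye outer corner (image-left)
    [43.3, 32.7, -26.0],    # eye outer corner (image-right)
    [-28.9, -28.9, -24.1],  # mouth corner (image-left)
    [28.9, -28.9, -24.1],   # mouth corner (image-right)
])


def head_pose(landmarks_2d, model_3d=MODEL_3D):
    """(pitch, yaw, roll) in degrees for R = Rz(roll) @ Ry(yaw) @ Rx(pitch), scaled-orthographic camera."""
    X = model_3d - model_3d.mean(axis=0)
    pts = np.asarray(landmarks_2d, dtype=float)
    x = pts - pts.mean(axis=0)
    # Least-squares affine camera x ~= X @ M.T, where M (2x3) = scale * first two rows of R.
    M = np.linalg.lstsq(X, x, rcond=None)[0].T
    # Nearest matrix with orthonormal rows removes scale and shear.
    U, _, Vt = np.linalg.svd(M, full_matrices=False)
    R2 = U @ Vt
    R = np.vstack([R2, np.cross(R2[0], R2[1])])  # third row completes a right-handed rotation
    pitch = np.degrees(np.arctan2(R[2, 1], R[2, 2]))
    yaw = np.degrees(np.arcsin(np.clip(-R[2, 0], -1.0, 1.0)))
    roll = np.degrees(np.arctan2(R[1, 0], R[0, 0]))
    return float(pitch), float(yaw), float(roll)
```

Because the angles come from an explicit rotation matrix, each one has a clear geometric meaning, which is the interpretability argument made above.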

8. 3D Eye Gaze Estimation and Gaze Mapping

Beyond head pose, the system estimates 3D eye gaze direction.

This allows:

- mapping gaze into predefined zones (road, mirrors, dashboard, phone),

- computing gaze dynamics over time.

Gaze mapping translates raw gaze vectors into semantic attention zones, enabling higher-level distraction analysis.
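A minimal version of gaze mapping converts a 3D gaze direction into yaw and pitch angles and looks them up in a table of angular zones. The sketch assumes a vehicle-aligned frame (x right, y up, z forward); the zone names and boundaries are hypothetical and would in practice depend on the vehicle geometry and camera placement. Directions that fall outside every zone map to `"other"`.

```python
import math
from typing import Dict, Sequence, Tuple

# Hypothetical zones: (yaw_min, yaw_max, pitch_min, pitch_max) in degrees.
ZONES: Dict[str, Tuple[float, float, float, float]] = {
    "road":         (-15.0, 15.0, -10.0, 10.0),
    "left_mirror":  (-60.0, -35.0, -15.0, 5.0),
    "right_mirror": (35.0, 70.0, -15.0, 5.0),
    "dashboard":    (-15.0, 15.0, -35.0, -10.0),
}


def gaze_angles(v: Sequence[float]) -> Tuple[float, float]:
    """Yaw and pitch (degrees) of a gaze direction in an x-right, y-up, z-forward frame."""
    x, y, z = v
    yaw = math.degrees(math.atan2(x, z))
    pitch = math.degrees(math.atan2(y, math.hypot(x, z)))
    return yaw, pitch


def gaze_zone(v: Sequence[float], zones: Dict[str, Tuple[float, float, float, float]] = ZONES) -> str:
    yaw, pitch = gaze_angles(v)
    for name, (y0, y1, p0, p1) in zones.items():
        if y0 <= yaw <= y1 and p0 <= pitch <= p1:
            return name
    return "other"
```

A production mapping would more likely intersect the gaze ray with calibrated cabin surfaces, but an angular table is enough to show how continuous vectors become discrete labels.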

9. Distraction Analysis (Head Pose & Gaze Dynamics)

Distraction is assessed using temporal gaze and head pose dynamics, including:

- zone glance frequency,

- glance duration,

- cumulative time spent in each zone.

This module answers questions like:

- How often does the driver look away from the road?

- For how long?

- Is the behavior repetitive or prolonged?

Distraction analysis operates at a higher semantic level than raw perception.
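The questions above can be answered from a per-frame sequence of zone labels. In the sketch below, a glance is a maximal run of frames with the same label, and an eyes-off-road episode is a maximal run of frames outside the road zone, whatever the other zone is. The metric names are hypothetical; the sketch is meant to show the bookkeeping, not the system's exact definitions.

```python
from collections import Counter
from itertools import groupby
from typing import Dict, Sequence


def glance_metrics(zones: Sequence[str], fps: float, on_road: str = "road") -> Dict[str, object]:
    total_s = len(zones) / fps
    # Glances: maximal runs of identical zone labels, as (zone, duration in seconds).
    glances = [(z, sum(1 for _ in g) / fps) for z, g in groupby(zones)]
    glances_per_zone = Counter(z for z, _ in glances)
    # Eyes-off-road episodes: maximal runs of frames outside the road zone.
    episodes = [sum(1 for _ in g) / fps
                for off, g in groupby(zones, key=lambda z: z != on_road) if off]
    return {
        "glances_per_zone": dict(glances_per_zone),                                   # how often
        "off_road_episodes_per_min": 60.0 * len(episodes) / total_s if total_s else 0.0,
        "longest_off_road_s": max(episodes, default=0.0),                           # for how long
        "off_road_fraction": sum(episodes) / total_s if total_s else 0.0,          # accumulated
    }
```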

10. Driver & Body Context Modules

Additional context modules include:

- driver detection and tracking,

- upper body pose estimation,

- hands-on-wheel detection,

- optional hands-on-phone detection (wide field-of-view camera setups).

These components provide contextual signals that reinforce or invalidate drowsiness and distraction hypotheses.

Some of these modules rely on deep learning models, others on geometric reasoning.

11. Real and Synthetic Dataset Generation

A key aspect of the system is dataset generation, combining:

- real captured data,

- synthetic data (especially for eyes and gaze).

Synthetic data helps:

- cover rare cases,

- augment training distributions,

- reduce data collection costs.

This feeds both:

- model training,

- correlation learning between visual and physiological signals.

12. Correlation Learning and Decision Logic

At the top of the pipeline, signals from:

- eye dynamics,

- gaze,

- head pose,

- physiological indicators (if available)

are fused using correlation learning and decision logic.

The system is designed to:

- avoid single-signal decisions,

- favor consistent multi-cue evidence,

- degrade gracefully when some signals are unavailable.
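The three design goals above can be illustrated with a deliberately simple rule-plus-weights fusion. In this sketch, each cue provides a drowsiness score in [0, 1], or `None` when unavailable; weights are renormalized over the available cues; and an alert additionally requires at least two individual cues to agree. The cue names, weights, and thresholds are all hypothetical, and this stands in for, rather than reproduces, the correlation learning used in the system.

```python
from typing import Dict, Optional, Tuple

# Hypothetical cue weights; in practice these would be learned or calibrated.
WEIGHTS: Dict[str, float] = {"eye_closure": 0.4, "blink_dynamics": 0.2, "yawning": 0.2, "head_pose": 0.2}


def fuse_drowsiness(scores: Dict[str, Optional[float]], alert_thr: float = 0.6,
                    cue_thr: float = 0.5, min_cues: int = 2) -> Tuple[bool, float]:
    """Return (alert, fused_score) from per-cue scores in [0, 1]; None marks an unavailable cue."""
    available = {k: v for k, v in scores.items() if v is not None and k in WEIGHTS}
    if not available:
        return False, 0.0  # no evidence at all: do not alert
    # Renormalize over available cues so missing signals do not drag the score down.
    wsum = sum(WEIGHTS[k] for k in available)
    fused = sum(WEIGHTS[k] * v for k, v in available.items()) / wsum
    # Multi-cue agreement: a single strong cue is not enough to alert.
    agreeing = sum(1 for v in available.values() if v >= cue_thr)
    return fused >= alert_thr and agreeing >= min_cues, fused
```

Requiring `min_cues` agreeing cues is conservative: if only one cue survives, the sketch never alerts. A softer policy could lower the requirement when fewer cues are available, trading false-alarm robustness for coverage.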

Why DMS Is a System — Not a Model

A key takeaway from this work is that Driver Monitoring is not a single AI model.

It is a system of systems, combining:

- perception,

- geometry,

- temporal analysis,

- machine learning,

- domain knowledge.

Robust DMS performance comes from:

- careful system design,

- signal redundancy,

- temporal reasoning,

- hardware-aware optimization.

Closing Thoughts

The Driver Monitoring System described here was designed to operate under real automotive constraints:

- NIR imagery,

- real-time execution,

- embedded hardware,

- long-term stability.

Detecting drowsiness and distraction reliably requires far more than detecting a face or blinking eyes — it requires a coherent, multi-modal perception pipeline, where each component reinforces the others.

This complexity is not accidental.

It is the price of building systems that must work every drive, every night, for every driver.